Ah, this is that Daenerys bot story again? It keeps making the rounds, always leaving out a lot of rather important information.

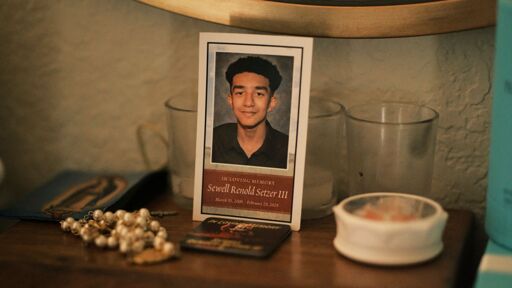

The bot actually talked him out of suicide multiple times. The kid was seriously disturbed and his parents were not paying the attention they should have been to his situation. The final chat before he committed suicide was very metaphorical, with the kid saying he wanted to “join” Daenerys in West World or wherever it is she lives, and the AI missed the metaphor and roleplayed Daenerys saying “sure, come on over” (because it’s a roleplaying bot and it’s doing its job).

This is like those journalists that ask ChatGPT “if you were a scary robot how would you exterminate humanity?” And ChatGPT says “well, poisonous gasses with traces of lead, I guess?” And the journalists go “gasp, scary robot!”

that additional context is super interesting, but it doesn’t take away from the fundamental reality, which is that when someone opens up to you about suicidal ideation, it’s not acceptable to merely do your best to dissuade them; it’s critical to get them the help they need, and there’s just no way for an LLM to do that.

this individual is an outlier in that his personal outcome was spectacularly bad, but his story seems familiar to me. I know a lot of people who seem to feel like they’re building real relationships with these bots.

Not to mention the gun that was left in easy reach by his parents even after being told he was depressed.

according to the article it was hidden somewhere. not locked up or anything just hidden

What’s hidden mean? In a cupboard, because that isn’t hidden it’s just put away.

Anywhere besides a locked safe is irresponsible

You’re acting as if the bot had some sort of intention to help him. It’s a bot. It has zero intention whatsoever since it’s not a conscious entity. It is programmed to respond to an input. That’s it.

The larger picture here is that people are using this technology as if it were a conscious entity, including the mentally ill. That is very dangerous, and it can drive people to action, as we can see.

That’s not to say I have any idea how to handle this. Because I don’t have a clue. But it is a discussion that needs to be had rather than minimizing the situation with a “well, the bot actually tried to talk him out of suicide”, because in my opinion that’s not the point. We are interacting with this technology in a way that is changing our own behavior and world view. And it is causing real world harm like this.

When we make something so believable as to trick people into thinking that they’re interacting with consciousness, that is a giant alarm we must discuss. Because at the end of the day, it’s a technology that can be owned, controlled, and manipulated by the owner class to serve their needs of maintaining power.

You’re acting as if the bot had some sort of intention to help him.

No I’m not. I’m describing what actually happened. It doesn’t matter what the bot’s “intentions” were.

The larger picture here is that these news articles are misrepresenting the events they’re reporting on by omitting significant details.

The key issue seems to be people with poor mental health and/or critical thinking skills making poor decisions. The obvious answer would be to deal with their mental health or critical thinking issues, something which very few countries in the world are doing to any useful degree, but the US is doing worse than most developed countries.

Or we could regulate or ban AI. That seems easier.

The chatbot is the problem here

The parents weren’t paying attention to their obviously disturbed kid and they left a gun lying around for him to find. But sure, it was the chatbot that was the problem. Everything would have been perfectly fine forever without it.

Human talking to a human: “If you were going to kill someone, how would you do it?”

Human: “I consume a lot of True Crime stuff so I think I have a bit of an idea on how to get away with stuff, or at least some common blunders, why?”

Later

Tonight’s top story, local person claims they know how to get away with murder!

If he was falling in love with a chat bot he wasn’t happy.

When lawyer Meetali Jain found a call from Megan Garcia in her inbox in Seattle a couple of weeks later, she called back immediately. Jain works for the Tech Justice Law Project, a small nonprofit that focuses on the rights of users on the internet. “When Megan told me about her case, I also didn’t know anything about Character.AI,” Jain says in a video call. “Even though I work in this area, I had never heard of this app.” Jain has two children of her own, eight and 10 years of age. “I asked my son. He doesn’t even have a phone, but he had heard about it at school and through ads on YouTube that specifically target young users. And then I realized that these companies are experimenting with our children without our knowledge.”

…

the world needs to urgently integrate

- critical thinking

- media interpretation

- AI fundamentals

- applied statistics

courses into every school’s ciriculum starting from the age of ten to graduation, repeated yearly. Otherwise we are fucked.

Just teach kids that AI isn’t human and isn’t a replacement for humanity or human interaction of any kind.

It’s Clippy with a ginormous database. It’s cold-blooded.

Yes, I’m sure you’ll be able to convince kids that the new thing is bad because you say so, especially if you compare it to the antiquated mascot of a legacy word processor.

It’s not about it being bad. It’s about expectations and reality. It’s not human. Can’t replace human emotion and thought. Just process data and give analysis.

There is an emotional factor that proper human decision making requires. Otherwise, wiping out half the human population would probably be suggested for the sake of some cold efficiency that only a machine or psychopath could accept.

Same goes with something like suicide and mental health/human relationships. I don’t trust a machine’s judgment on that.

Spelling too.

Well this is terrifying. It really seems like there is little to no regulation protecting kids online these days.

Because all the laws that were pushed in the last twenty-five years for protecting children weren’t actually about protecting children

They’re all about increased conservative control over other people’s kids

And adults too. When you combine “the law says you can’t offer this service to children or we’ll destroy you” with “there’s no way to reliably tell if the people we’re offering this service to are children” the result is “guess we can’t offer this service to anyone.”

True. They start with the kids because they have no rights then expand once they have the foothold. We need to push back

That’s what parents are for.

Well, yes, but stuff like chatbots and social media should be way better regulated.

Right now we see the equivalent of people selling drugs and guns freely in the streets (including to toddlers) and expect the parents to regulate all that.

Society is being actively eroded, while governments are fecklessly watching it happen.

Regulated how?

I’d have to write two PhD theses to answer this one question properly.

Instead I’ll just give two examples and keep it shallow:

This case: A 14yo should not have completely unsupervised access to an AI chatbot - it needs to be through a family/child account, same as for e.g. Fortnite. Also, given the nature of the matter and looking at the article: if the chat turns ‘disturbing’, the parent needs to be made aware. (Etc etc)

Another case is TikTok: honestly, I’d just ban it together with shorts and reels. IMO this rots the brains of the younger generation. I’m not even sure there is a healthy way of consuming this type of content.

Okay. But by what mechanism would these things be enforced without encroaching on the privacy and freedoms of adults? It’s the same problems as policing porn or violent media. No one wants the government looking over their shoulder.

What exactly do you mean by ‘these things’?

Instead I’ll just give two examples and keep it shallow:

This case: A 14yo should not have completely unsupervised access to an AI chatbot - it needs to be through a family/child account, same as for e.g. Fortnite. Also, given the nature of the matter and looking at the article: if the chat turns ‘disturbing’, the parent needs to be made aware. (Etc etc)

Another case is TikTok: honestly, I’d just ban it together with shorts and reels. IMO this rots the brains of the younger generation. I’m not even sure there is a healthy way of consuming this type of content.

Only to a certain extent. What can they do against so many changes in the tech world? Just look at WhatsApp, which just introduced AI in its chat. There is a point when tech giants should just be strictly regulated in the interest of the public.

Or how about parents regulate their children, so that we don’t have government nannies telling full grown adults what they’re allowed to do with chatbots?

It’s not about regulating what full grown adults do with chatbots; it’s about regulating what corporations do with their products.

You don’t see how one leads directly to the other? Full grown adults are the users of those corporations’ products. If the corporations aren’t allowed to put certain features in those products then that’s the same as prohibiting their users from using those features.

Imagine if there was a government regulation that prohibited the sale of cars with red paint on them. They’re not prohibiting an individual person from owning a car with red paint, they’re not prohibiting individuals from painting their own cars red, but don’t you think that’ll make it a lot harder for individuals to get red cars if they want them?

What can they do against so many changes in the tech world?

Be involved in their kids’ lives? Tech isn’t the problem here, any more than TV, drugs, rock and roll, video games, D&D, or organized religion could have been. Kids get into some dumb shit. Just because it’s the hot new thing doesn’t make it any different.

Removed by mod

Lazy troll

Removed by mod

Lol, lmao
